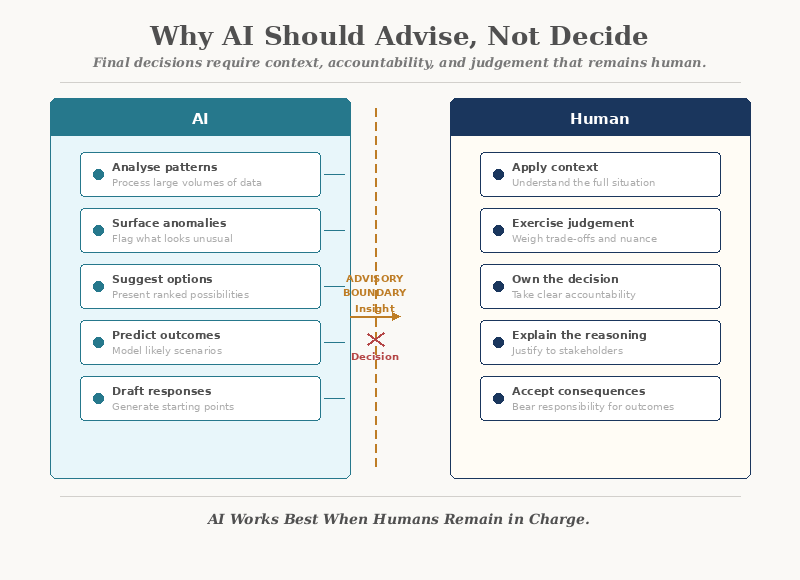

AI is strong at pattern recognition and data synthesis. But final decisions require context, accountability, and judgement, and those remain human.

What AI Does Well

AI has genuine strengths. When applied within a clear system, it can:

- Identify patterns across large volumes of data

- Surface anomalies and risks that humans might miss

- Generate options, summaries, and recommendations quickly

- Reduce the time between question and insight

These capabilities are real. They save time and improve awareness.

But capability is not the same as authority.

The Difference Between Advising and Deciding

The distinction matters more than most organisations realise.

When AI advises:

- It presents information

- It highlights options

- It flags risks

- A human applies judgement

When AI decides:

- It acts on probabilities

- It bypasses context

- It cannot weigh values

- No one owns the outcome

An AI system can tell you that a customer is likely to churn. It cannot tell you whether the relationship is worth saving, what the history looks like, or what a fair response might be.

That requires judgement — and judgement is always contextual.
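One way to picture the advise/decide split in the churn example is a record that keeps the model's output and the human's decision as separate things. The sketch below is illustrative only: the names `Advice`, `Decision`, and `decide`, the customer ID, the score, and the action labels are all hypothetical, not taken from any particular product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Advice:
    """What the model contributes: a signal and a suggestion, not an action."""
    customer_id: str
    churn_probability: float  # hypothetical model score between 0 and 1
    suggested_action: str


@dataclass(frozen=True)
class Decision:
    """What the human contributes: the action, the reason, and ownership."""
    advice: Advice
    action: str
    rationale: str
    decided_by: str

    @property
    def overrides_advice(self) -> bool:
        # True when the person chose something other than the suggestion.
        return self.action != self.advice.suggested_action


def decide(advice: Advice, action: str, rationale: str, decided_by: str) -> Decision:
    # Refuse to record a decision that nobody owns or nobody can explain.
    if not decided_by.strip():
        raise ValueError("a decision needs a named owner")
    if not rationale.strip():
        raise ValueError("a decision needs a stated rationale")
    return Decision(advice, action, rationale, decided_by)


# Illustrative values only.
advice = Advice("C-1042", 0.83, "offer_discount")
decision = decide(
    advice,
    action="call_account_manager",
    rationale="Ten-year customer with an unresolved billing complaint.",
    decided_by="j.smith",
)
print(decision.overrides_advice)  # True
```

The design choice worth noting is that the model can only ever produce an `Advice`. A `Decision` exists only once a named person has supplied an action and a reason, which is where the history and the sense of a fair response enter.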

Why Organisations Let AI Decide (Without Meaning To)

Most organisations do not consciously hand decision-making to AI. It happens gradually:

- A recommendation engine starts suggesting actions

- People begin following suggestions without question

- Over time, the suggestion becomes the default

- Eventually, overriding the AI feels like going against "the system"

This is not a technology failure. It is a governance gap.
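The gradual drift described above is hard to notice from the inside, so one possible governance signal is how often the final action simply matches the suggestion. The sketch below assumes a hypothetical decision log of `(suggested_action, final_action)` pairs; the function name and the sample data are invented for illustration.

```python
def acceptance_rate(decisions: list[tuple[str, str]]) -> float:
    """Share of decisions where the final action equals the AI suggestion.

    Each item is a hypothetical (suggested_action, final_action) pair.
    """
    if not decisions:
        raise ValueError("no decisions to measure")
    followed = sum(1 for suggested, final in decisions if suggested == final)
    return followed / len(decisions)


# Illustrative log: three of four suggestions were followed as given.
log = [
    ("offer_discount", "offer_discount"),
    ("offer_discount", "call_account_manager"),
    ("close_ticket", "close_ticket"),
    ("escalate", "escalate"),
]
print(acceptance_rate(log))  # 0.75
```

A high rate is not proof of over-reliance, since the suggestions may simply be good. A rate that climbs towards 1.0 while nobody can say why is a prompt to check whether people are still applying judgement or just following the default.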

What AI Cannot Do

Despite its capabilities, AI does not:

- Understand why a rule exists

- Weigh competing values or priorities

- Recognise when an exception is the right call

- Take responsibility for outcomes

- Explain its reasoning in terms people trust

AI operates on patterns. Decisions operate on principles.

A pattern can suggest what usually happens. A decision must account for what should happen.

The Accountability Problem

When an AI system makes or strongly influences a decision, accountability becomes unclear:

In regulated environments, public-facing services, or anywhere trust matters, these questions are not theoretical. They are operational.

If no one can explain why a decision was made, the organisation carries unmanaged risk.

The Danger of Over-Trust

When AI systems produce polished, confident-sounding outputs, people tend to defer to them. This creates a subtle but serious problem:

- Critical thinking diminishes

- Human skills atrophy over time

- Errors pass unquestioned because "the system said so"

- Organisational learning slows

The more an AI appears authoritative, the less likely people are to challenge it — even when they should.

Confidence in output is not the same as correctness of output.

Where AI Adds the Most Value

AI is most effective when it is positioned as a tool for insight, not a substitute for thinking.

In a well-designed system, AI can:

- Surface trends and patterns that inform strategy

- Draft responses and summaries for human review

- Flag exceptions and risks for human attention

- Reduce data processing time so people can focus on judgement

- Support decisions — without replacing them

This is not a limitation. It is responsible design.

Designing the Human-AI Boundary

Organisations that use AI well are deliberate about where the boundary sits between machine intelligence and human authority.

That means defining:

Without these boundaries, AI drifts from advisor to decision-maker — and accountability drifts with it.

AI Needs a Clear System Underneath

AI does not create clarity. It amplifies whatever is already there.

In a clear system, AI:

- Enhances decision quality

- Accelerates insight

- Supports accountability

In an unclear system, AI:

- Reinforces existing biases

- Produces misleading confidence

- Makes errors harder to trace

This is why the foundation matters. Before applying AI, organisations need to understand their system — the workflows, the decision points, the ownership, and the feedback loops.

AI applied to a visible, well-understood system creates leverage. AI applied to an invisible one creates risk.

The Right Role for AI in Organisations

The most effective organisations treat AI as:

Notice what is absent from this list: decision-maker.

That role belongs to people — supported by systems, informed by data, and accountable for outcomes.

Closing Reflection

AI will continue to grow in capability. The question is not whether organisations should use it — they should.

The question is how.

Used as an advisor, AI strengthens decisions. Used as a decision-maker, it weakens accountability.

The organisations that get this right will be the ones that understand: AI works best when humans remain in charge.