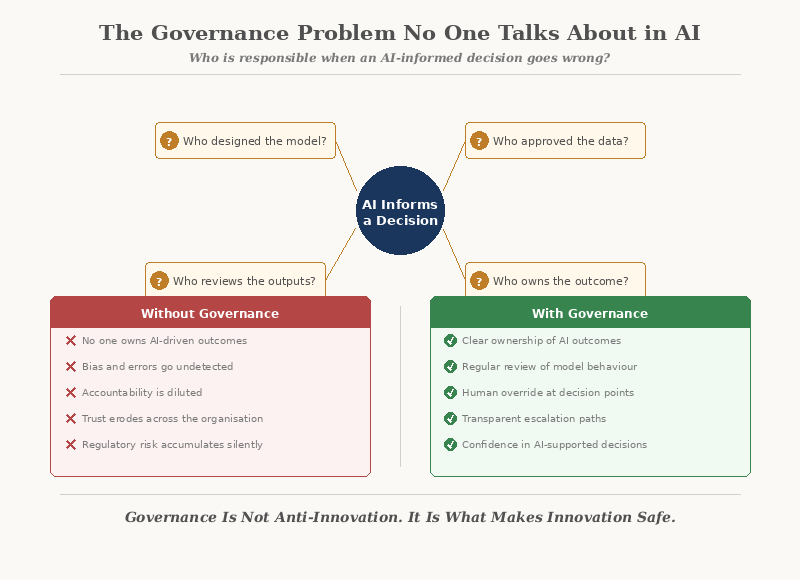

Who is responsible when an AI-informed decision goes wrong? In most organisations, nobody knows. That silence is the governance problem.

AI Is Being Adopted Faster Than It Is Being Governed

AI tools are entering organisations through every door — procurement, marketing, customer service, finance, HR. Teams are experimenting. Vendors are selling. Leaders are encouraging adoption.

But while the technology moves quickly, the governance conversations are not keeping pace.

- Who approved the use of this AI tool?

- What data is it accessing?

- Who reviews its outputs?

- What happens when it produces something wrong?

In most organisations, the answer to these questions is unclear — or entirely absent.

The gap between AI adoption and AI governance is where organisational risk quietly accumulates.

The Accountability Vacuum

Traditional accountability is structured around people and processes. A decision has an owner. A policy has an author. An outcome has someone who answers for it.

AI disrupts this structure. When an AI system contributes to a decision, it is no longer obvious who owns that decision or who answers for its outcome.

Without clear answers, accountability fragments. When something goes wrong, the response becomes a chain of deflection: the team blames the tool, IT blames the vendor, leadership blames the team.

Why Traditional Governance Doesn't Fit

Most governance frameworks were designed for systems that are predictable, rule-based, and transparent. Most AI systems are none of these things.

Traditional systems:

- Follow defined rules

- Produce consistent outputs

- Can be fully audited

AI systems:

- Learn from patterns

- Produce probabilistic outputs

- Change behaviour over time

This means AI governance cannot simply be bolted onto existing frameworks. AI requires a different kind of oversight, one that accounts for uncertainty, learning, and limited explainability.

What AI Governance Actually Requires

Effective AI governance is not a policy document filed away. It is a living set of practices embedded into how AI is selected, deployed, and monitored.

At minimum, it requires clarity on five things:

1. Approval

Who decides which AI tools are used, and based on what criteria? Without a clear approval process, AI adoption becomes ungoverned experimentation.

2. Scope

What is the AI being used for — and what is explicitly out of bounds? A tool approved for drafting emails should not quietly be used to assess job candidates.

3. Data

What data does the AI access? Where does it come from? Who ensures it is accurate, current, and appropriate? AI is only as good as the data behind it — and data quality is a governance responsibility.

4. Review

Who checks what the AI produces before it affects people? Automated outputs without human review are unaccountable outputs. Review is not optional — it is the governance mechanism.

5. Ownership

Who is accountable for outcomes that AI influences? Not the AI. Not the vendor. A named person with the authority and responsibility to intervene when things go wrong.

Governance without ownership is theatre. Ownership without governance is recklessness.
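One way to make the five points concrete is to keep a simple register with one entry per AI tool. The Python sketch below is only an illustration under stated assumptions: the class name `AIToolRecord`, its field names, and the example tool and role titles are all hypothetical, not part of any standard or product. It shows how a record can reveal which of the five questions are still unanswered, and how a scope check can catch a tool being used for something it was never approved for.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AIToolRecord:
    """One register entry per AI tool. All field names are illustrative."""
    tool_name: str
    approved_by: Optional[str] = None                          # 1. Approval
    approved_uses: List[str] = field(default_factory=list)     # 2. Scope (allowed)
    prohibited_uses: List[str] = field(default_factory=list)   # 2. Scope (out of bounds)
    data_sources: List[str] = field(default_factory=list)      # 3. Data
    reviewer: Optional[str] = None                             # 4. Review
    owner: Optional[str] = None                                # 5. Ownership

    def gaps(self) -> List[str]:
        """Return the governance questions this record leaves unanswered."""
        answers = {
            "approval": self.approved_by,
            "scope": self.approved_uses,
            "data": self.data_sources,
            "review": self.reviewer,
            "ownership": self.owner,
        }
        # An empty string, empty list, or None counts as "no answer yet".
        return [name for name, value in answers.items() if not value]

    def is_in_scope(self, use: str) -> bool:
        """A use is in scope only if it was explicitly approved."""
        return use in self.approved_uses and use not in self.prohibited_uses


# Hypothetical example: an email drafting tool with no named owner yet.
record = AIToolRecord(
    tool_name="email-drafting-assistant",
    approved_by="Head of Operations",
    approved_uses=["drafting emails"],
    prohibited_uses=["assessing job candidates"],
    data_sources=["staff-written prompts"],
    reviewer="Sending employee",
)

print(record.gaps())                                   # ['ownership']
print(record.is_in_scope("drafting emails"))           # True
print(record.is_in_scope("assessing job candidates"))  # False
```

The check is deliberately strict: anything not explicitly approved is treated as out of scope, which matches the idea that a tool approved for one purpose should not quietly drift into another. A spreadsheet with the same columns would serve the same purpose.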

The Hidden Risks of Ungoverned AI

When AI operates without governance, the risks are not hypothetical. They are immediate and concrete.

- Sensitive data is processed by tools with unclear privacy practices

- Biased recommendations go unchecked because no one is reviewing outputs

- Inconsistent use across teams creates conflicting results and confusion

- Regulatory exposure increases without anyone being aware

- Trust erodes — internally when staff distrust AI decisions, and externally when clients or customers are affected by errors

None of these risks require a dramatic failure to cause damage. They accumulate quietly, in the background, until they surface in ways that are difficult and expensive to resolve.

Governance Is Not Anti-Innovation

A common objection is that governance slows things down. In practice, the opposite is true.

Without governance:

- Teams duplicate effort because there is no shared approach

- Good ideas stall because leadership is uncertain about risk

- Failures create fear, which leads to blanket restrictions

With governance:

- Teams have clear boundaries within which to experiment safely

- Leadership can support adoption with confidence

- Failures become learning opportunities, not crises

Governance does not limit AI use. It makes AI use sustainable.

Where to Start

Most organisations do not need a comprehensive AI governance framework on day one. They need a starting point.

Establishing that starting point is not bureaucracy. It is basic operational hygiene for any organisation using AI.

Closing Reflection

AI governance is not a future problem. It is a present one.

Every organisation using AI is already making governance decisions — whether they realise it or not. The question is whether those decisions are intentional or accidental.

The technology will continue to evolve. The accountability structures around it must evolve faster.

Because when an AI-informed decision goes wrong, the first question will always be the same: who was responsible?